Long Short-Term Memory

What is LSTM

- It is a type of Recurrent Neural Network (RNN) architecture.

- It is designed to address the vanishing gradient problem (and, to a lesser extent, exploding gradients; see Optimization Algorithms#^4a1546) of traditional RNNs, which lets it capture long-term dependencies in sequential data.
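A minimal sketch of why gradients vanish or explode in a plain RNN. It assumes the simplest possible case: a scalar, linear recurrence h_t = w · h_{t-1} with no input and no activation. This is a deliberate simplification, not a real RNN. Backpropagating through T unrolled steps multiplies the gradient by the same weight w at every step. The function name `gradient_through_time` is hypothetical.

```python
def gradient_through_time(w: float, steps: int) -> float:
    """Return dh_T/dh_0 for the toy linear recurrence h_t = w * h_{t-1}."""
    grad = 1.0
    for _ in range(steps):
        grad *= w  # chain rule: each unrolled time step contributes one factor of w
    return grad


for w in (0.5, 1.0, 1.5):
    # The same weight is reused at every step, as in an unrolled vanilla RNN
    print(f"w={w}: dh_50/dh_0 = {gradient_through_time(w, 50):.3e}")
```

Over 50 steps the gradient shrinks to roughly 9e-16 for w = 0.5 and grows to roughly 6e8 for w = 1.5. Only w = 1 keeps it stable. A real RNN also multiplies by the tanh derivative at each step. That derivative is at most 1, so it makes vanishing even more likely.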

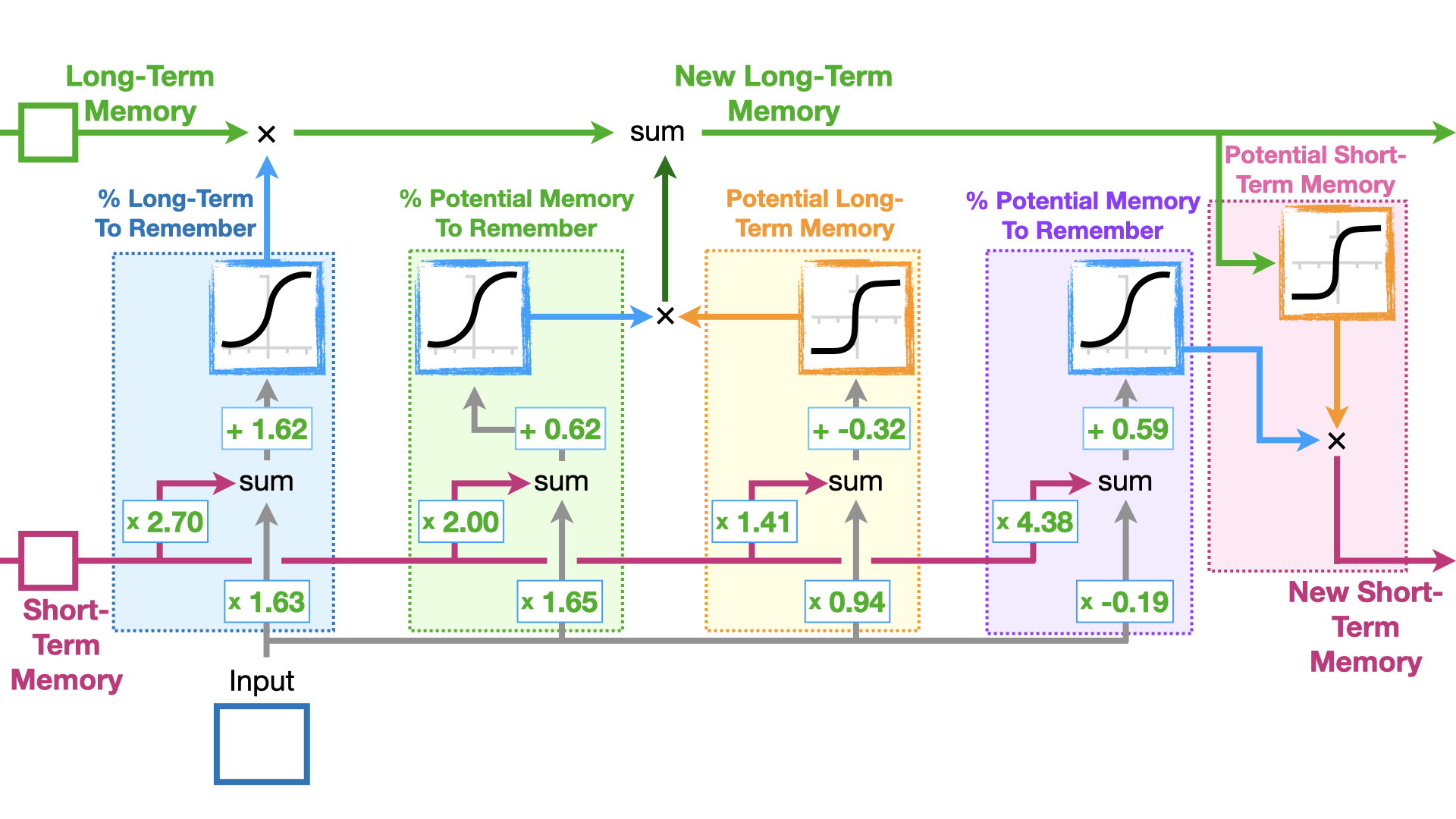

Long vs Short

- why "long": its memory cells can store information and carry it across many time steps

- long-term memory = cell state: no weight is applied to it directly (it is only scaled by the forget gate and then added to), which helps avoid vanishing/exploding gradients along this path

- short-term memory = hidden state: multiplied by weights at every step, just as in vanilla RNNs
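A worked sketch of the "no weights on the cell state" argument. It uses the standard LSTM update with a forget gate in common textbook notation. The symbols f_t (forget gate), i_t (input gate), o_t (output gate), \tilde{c}_t (candidate memory), W_h and \odot (element-wise product) are the usual names, not ones defined in this note. The gates themselves also depend on h_{t-1}, so the first gradient below covers only the direct cell-state path. It illustrates why the problem is mitigated rather than eliminated.

$$
\begin{aligned}
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t && \text{(long-term memory)}\\
h_t &= o_t \odot \tanh(c_t) && \text{(short-term memory)}\\
\left.\frac{\partial c_T}{\partial c_0}\right|_{\text{direct}} &= \prod_{k=1}^{T} \operatorname{diag}(f_k) && \text{(no weight matrix)}\\
\text{vanilla RNN: } h_t &= \tanh(W_h h_{t-1} + W_x x_t + b)\\
\frac{\partial h_T}{\partial h_0} &= \left(\operatorname{diag}(1 - h_T^2)\, W_h\right) \cdots \left(\operatorname{diag}(1 - h_1^2)\, W_h\right)
\end{aligned}
$$

The RNN gradient repeatedly multiplies by the same matrix W_h, so it tends to shrink or blow up geometrically. Along the cell-state path, each factor is a forget-gate value in (0, 1). When the network learns f_k close to 1, the gradient can survive many steps.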

LSTM units

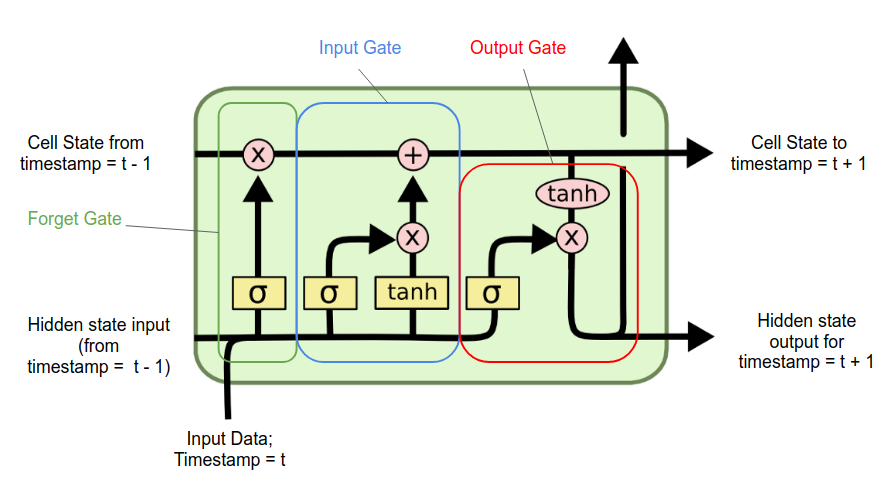

illustration figure 1

illustration figure 2

Three key components

- input gate

  - activation functions: tanh (-1 to 1) creates the candidate ("potential") long-term memory, and sigmoid (0 to 1) decides what percentage of that candidate is actually added

  - this stage determines how the long-term memory (cell state) is updated

- forget gate

  - activation function is sigmoid (0 to 1)

  - decides what percentage of the long-term memory is remembered

- output gate
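The gate descriptions above can be combined into one time step of a single-unit (scalar) LSTM. This is a minimal sketch assuming the common formulation with a forget gate. The `GateParams` class, the `lstm_step` function and all parameter values are hypothetical and chosen only for illustration. They are not trained weights. Real LSTM layers use vectors and weight matrices instead of scalars.

```python
import math
from dataclasses import dataclass


@dataclass
class GateParams:
    """Hypothetical per-gate parameters for a scalar LSTM unit."""
    w_h: float  # weight on the previous short-term memory (hidden state)
    w_x: float  # weight on the current input
    b: float    # bias

    def pre(self, h: float, x: float) -> float:
        """Pre-activation value w_h * h + w_x * x + b."""
        return self.w_h * h + self.w_x * x + self.b


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, forget, inp, cand, out):
    """One time step of a single-unit LSTM; returns (new_h, new_c)."""
    # Forget gate: sigmoid gives the fraction (0..1) of long-term memory to keep
    f = sigmoid(forget.pre(h_prev, x))
    # Input stage: tanh proposes a candidate memory (-1..1),
    # and the sigmoid input gate decides what fraction of it to add
    g = math.tanh(cand.pre(h_prev, x))
    i = sigmoid(inp.pre(h_prev, x))
    # New long-term memory: c_prev is only scaled by f, never multiplied by a weight
    c = f * c_prev + i * g
    # Output gate: sigmoid decides what fraction of tanh(c) becomes the new short-term memory
    o = sigmoid(out.pre(h_prev, x))
    h = o * math.tanh(c)
    return h, c


# Arbitrary illustrative parameters (hypothetical, not trained values)
forget = GateParams(w_h=0.5, w_x=0.5, b=1.0)
inp = GateParams(w_h=0.5, w_x=0.5, b=0.0)
cand = GateParams(w_h=0.5, w_x=0.5, b=0.0)
out = GateParams(w_h=0.5, w_x=0.5, b=0.0)

h, c = 0.0, 0.0  # start with empty short- and long-term memory
for x in [1.0, 0.5, -0.25]:
    h, c = lstm_step(x, h, c, forget, inp, cand, out)
    print(f"x={x:+.2f}  h={h:.4f}  c={c:.4f}")
```

The short-term memory h always stays in (-1, 1) because it is a sigmoid output times a tanh output. The long-term memory c has no such bound. It only gets scaled by the forget gate and added to, so it can carry information across many steps when f stays close to 1.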