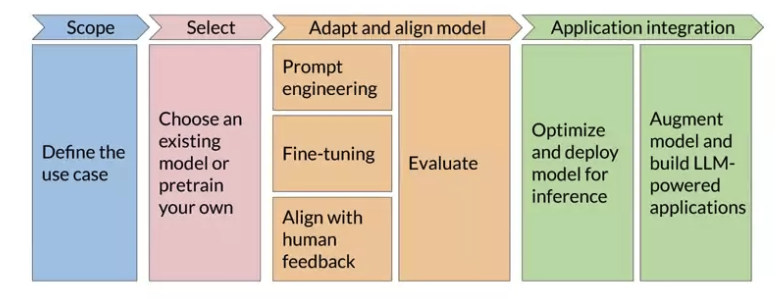

Generative AI Project Lifecycle

- Define the scope

- Choose an existing model (or pre-train a model)

  - scaling choices for pre-training

    - goal: maximize model performance

    - constraint: compute budget

    - scaling options

      - increase dataset size (number of tokens)

      - increase model size (number of parameters)

    - finding: for a fixed compute budget, increasing the training dataset size tends to matter more than increasing model size, since large models are often undertrained relative to their parameter count

- Prompt engineering: Prompt Engineering

- Fine-tuning (supervised learning/supervised fine-tuning): LLM Finetuning

- Align with human feedback (safety tuning)

  - goal: HHH = Helpful, Honest, Harmless

  - e.g., reinforcement learning from human feedback (RLHF)

- Evaluation: Evaluate AI Model & System

- Model optimization and deployment

  - Model-level optimization: LLM Optimization (pre-deployment, optimizing the model itself)

  - Inference-level optimization: AI model Inference Optimization (post-deployment, optimizing model serving and runtime)

- Augment model and build LLM-powered applications: LLM-powered Application
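
The pre-training scaling trade-off above (dataset size versus model size under a fixed compute budget) can be made concrete with a rough calculation. The sketch below is not from these notes: it assumes the common approximation that training compute is about `6 * N * D` FLOPs for `N` parameters and `D` tokens, and a rule of thumb of roughly 20 training tokens per parameter. The function name `compute_optimal_allocation` and the example budget are hypothetical.

```python
import math


def compute_optimal_allocation(compute_flops, tokens_per_param=20.0):
    """Split a training FLOP budget between model size and dataset size.

    Assumptions (not from the notes):
      - training compute C is approximately 6 * N * D FLOPs
      - the data-to-parameter ratio D / N is fixed at tokens_per_param
    Returns (n_params, n_tokens).
    """
    if compute_flops <= 0 or tokens_per_param <= 0:
        raise ValueError("compute_flops and tokens_per_param must be positive")
    # Substitute D = r * N into C = 6 * N * D, giving N = sqrt(C / (6 * r)).
    n_params = math.sqrt(compute_flops / (6.0 * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens


# Hypothetical budget, chosen only for illustration.
budget = 5.88e23
n_params, n_tokens = compute_optimal_allocation(budget)
print(f"parameters: {n_params:.2e}, tokens: {n_tokens:.2e}")
```

Under these assumptions, the example budget corresponds to roughly 70 billion parameters trained on roughly 1.4 trillion tokens. Raising `tokens_per_param` shifts the same budget toward a smaller model trained on more data, which is the direction the finding above points in.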